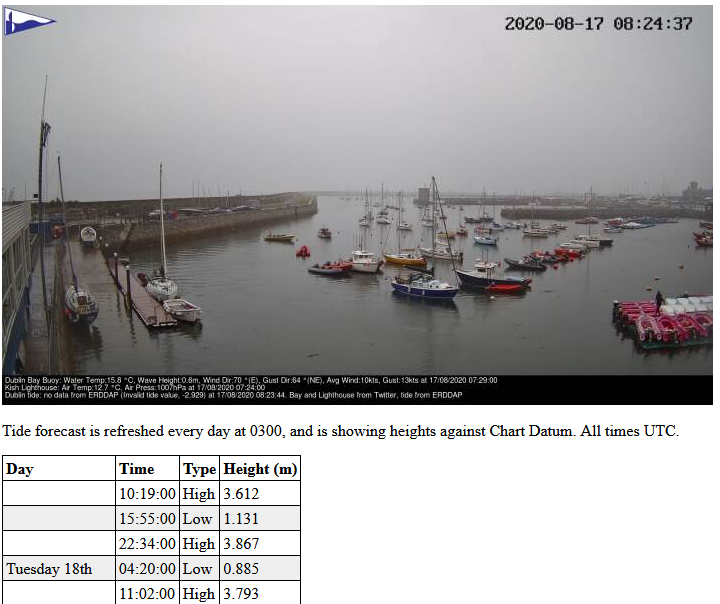

The DMYC has an IP camera pointed at the slip; the URL is very creative: slipcam.dmyc.ie. Through the power of a Python script that knows how to talk to Twitter and another API, the image that gets sent to a browser has an overlay at the bottom with the current wind data from the Dublin Bay Buoy, the air pressure from the Kish Lighthouse, and the current state of tide from Dublin Port.

Recently, the Dublin Port data started saying that the tide was at negative metres. This is technically possible if you measure the current tide relative to, say, mean high water or even mean sea level, but according to the documentation for ERDDAP, the datum should be LAT (lowest astronomical tide). This prompted me to go looking for API services that would provide me with tide data, preferably at a cost that doesn’t break the bank.

Enter worldtides.info. They provide an API that can return half-hourly tide height predictions, tide extremes, and a graphic of the tide, all for up to 7 days in advance and against a datum you specify, be it Lowest Astronomical Tide, Chart Datum, Mean High Water, or others. They give you 100 credits’ worth of queries to get started, and after that the price can drop as low as USD 0.05c/credit; basic queries consume 1 credit. Once I had it all working, I forked over 10 USD for 20,000 credits. At 2 credits per day (since I make two queries), that is 10,000 days of queries, or roughly 27 years, so I think that’ll be enough for a few decades.
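For illustration, a query for tide extremes might be built along these lines. This is a sketch in Python, not the PHP actually used on the site. The endpoint path and the parameter names (`extremes`, `days`, `datum`, `key`) are assumptions based on the WorldTides v3 API and should be checked against the current documentation; the coordinates and `YOUR_API_KEY` are placeholders.

```python
from urllib.parse import urlencode

# Assumed endpoint; check the WorldTides documentation before relying on it.
API_BASE = "https://www.worldtides.info/api/v3"


def build_extremes_url(lat, lon, days, api_key, datum="LAT"):
    """Build a URL asking for high/low tide extremes for the coming days."""
    params = {
        "extremes": "",  # assumed flag-style parameter requesting high/low tides
        "lat": lat,
        "lon": lon,
        "days": days,    # 7 for the Slipcam page, 2 for the main website
        "datum": datum,  # heights relative to lowest astronomical tide
        "key": api_key,
    }
    return f"{API_BASE}?{urlencode(params)}"


if __name__ == "__main__":
    # Placeholder coordinates, roughly in Dublin Bay.
    print(build_extremes_url(53.29, -6.13, 7, "YOUR_API_KEY"))
```

Calling it once with `days=7` and once with `days=2` would account for the two queries a day.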

For the slipcam, I wrote two bits of PHP. One runs daily from a cron job, fetching data from the API and writing it to disk. This gives me a cache, because I’m happy to fetch the tidal extremes (high/low) only once a day, once for the coming 7 days and once for the coming 2 days: the 7-day data is for the Slipcam page, the 2-day data is for the main website. The other bit of PHP replaced the static index.html on the slipcam site; it loads the JSON data from the cache file, parses it, and spits out a table of forecast tide heights. A bit of CSS to make it look slightly neater, and job’s a good’un.
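The page side of that can be sketched roughly as follows, again in Python rather than the PHP that actually runs on the site. The response shape is an assumption: a top-level `extremes` list whose entries carry `dt` (Unix timestamp), `height` (metres) and `type` ("High" or "Low"). The function names `load_cache` and `render_tide_table` are made up for this example.

```python
import html
import json
from datetime import datetime, timezone


def load_cache(path):
    """Read the JSON file written by the daily cron job."""
    with open(path, encoding="utf-8") as fh:
        return json.load(fh)


def render_tide_table(data):
    """Turn a parsed API response into an HTML table of tide extremes."""
    rows = []
    for extreme in data.get("extremes", []):
        # Assumed field: "dt" is a Unix timestamp in seconds.
        when = datetime.fromtimestamp(extreme["dt"], tz=timezone.utc)
        rows.append(
            "<tr><td>{}</td><td>{}</td><td>{:.2f} m</td></tr>".format(
                when.strftime("%a %d %b %H:%M"),
                html.escape(str(extreme["type"])),
                extreme["height"],
            )
        )
    header = "<tr><th>Time (UTC)</th><th>Tide</th><th>Height</th></tr>"
    return "<table>\n" + "\n".join([header] + rows) + "\n</table>"


if __name__ == "__main__":
    # Made-up sample data standing in for the cached API response.
    sample = {"extremes": [{"dt": 0, "height": 3.912, "type": "High"}]}
    print(render_tide_table(sample))
```

Reading from the cache file rather than the API keeps page loads from spending credits, which is the whole point of the daily cron job.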

For the main website, I found the JSON Content Importer plugin, which can read a JSON file from a URL (but not file://), and has a very basic processing language you can use without buying the pro version. The footer now has the next 2 days of tide data displayed.

The biggest pain was that the hosting control panel said to use “/usr/local/bin/php”, but the actual executable was at “/usr/bin/php”. I had to write a shell script that stuck the output of “which php” into a text file so I could find out where php was.